I’m somewhat chagrined to note that I made a major mistake in writing PROBABILITY ZERO: I failed to notice a paper recently published in Nature that would have had a significant impact on how the book was written. So much so, in fact, that it is necessary to revise the core MITTENS argument, revise the entire book, and release a second edition.

Here is what happened, what it means, and why every honest reader of the first edition deserves to know that the standard model of evolution by natural selection is in even worse shape than the original calculations suggested.

The Number That Was Never Really 35 Million

For twenty years, the standard textbook claim has been that human and chimpanzee DNA is “98.8 percent identical.” That figure, repeated in every popular science article, every introductory biology textbook, and every “I fucking love science” tweet about how we are practically the same animal as a chimp, traces back to the 2005 Nature paper by the Chimpanzee Sequencing and Analysis Consortium. The headline number from that paper was approximately 35 million single nucleotide differences and 5 million indels affecting roughly 90 million base pairs of sequence. Forty million differences out of three billion base pairs. About 1.2 percent.
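As a quick check on the headline percentage, here is a minimal sketch that uses only the round figures quoted above. The 3 billion base pair genome length is the approximation from the text, not a measured assembly size, and the function name is purely illustrative.

```python
# Round figures quoted in the text above (not re-derived from the 2005 paper).
GENOME_BP = 3_000_000_000   # approximate genome length in base pairs
SNP_DIFFS = 35_000_000      # single nucleotide differences
INDEL_EVENTS = 5_000_000    # indel events


def percent_of_genome(count: int, genome_bp: int = GENOME_BP) -> float:
    """Return `count` expressed as a percentage of the genome length."""
    return 100.0 * count / genome_bp


snp_only = percent_of_genome(SNP_DIFFS)
snp_plus_indels = percent_of_genome(SNP_DIFFS + INDEL_EVENTS)

print(f"SNPs only:        {snp_only:.2f}%")         # 1.17%
print(f"SNPs plus indels: {snp_plus_indels:.2f}%")  # 1.33%
```

With these round inputs, the SNP-only count rounds to the familiar 1.2 percent, while the full forty million comes out closer to 1.3 percent.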

The first edition of PROBABILITY ZERO used these consensus figures because they were the consensus figures. The MITTENS framework demonstrates that the standard model fails by about 220,000-fold against the 35-40 million SNP target. That alone is a five-orders-of-magnitude failure. A theory that cannot account for 99.9995 percent of what it claims to explain is a theory that has lost its license to be called science.

But the 35 million figure was never the total observed divergence between the two genomes. It was only the divergence in the portion of the genomes that aligned cleanly to each other. The unalignable regions — sequence that is so different that no reasonable algorithm can map one species’ DNA onto the other’s coordinate system — were excluded from the difference count and quietly placed in supplementary tables where no journalist or undergraduate would ever read them.

This was not a methodological oversight. The 2005 paper aligned roughly 2.4 billion base pairs of the chimp genome to the human reference, out of a total chimp genome of approximately 3 billion. Six hundred million base pairs of unalignable sequence existed. The authors knew about it. But no one else did, and certainly no one really understood the significance of those unaligned sequences.

Yoo et al. 2025: The Numbers Are Corrected

In April 2025, the Eichler lab at the University of Washington published the capstone of the telomere-to-telomere genome program: complete, gapless, diploid assemblies of six ape species, at the same quality as the human reference. The paper has 122 authors. It has been cited 98 times in the eight months since publication. It is the most authoritative comparative ape genome paper in existence, and it will be for years to come. Yoo, D. et al., Complete sequencing of ape genomes, Nature 641, 401-418 (2025).

Here is the sentence that ends the standard divergence figure as a citable claim:

Overall, sequence comparisons among the complete ape genomes revealed greater divergence than previously estimated. Indeed, 12.5–27.3% of an ape genome failed to align or was inconsistent with a simple one-to-one alignment, thereby introducing gaps. Gap divergence showed a 5-fold to 15-fold difference in the number of affected megabases when compared to single-nucleotide variants.

The total structural divergence between human and ape genomes, including all insertions, deletions, duplications, inversions, and rearrangements, affects between five and fifteen times more base pairs than the single nucleotide differences that everyone has been counting since 2005. The 35 million SNP figure was counting the smaller of two divergence categories and ignoring the larger one. And the gap range is not uncertainty; it reflects the spread between the most closely related apes and the most distantly related ones.

For the chimp-human comparison, the gap-divergence minimum is 12.5 percent. For the gorilla-human, it is 27.3 percent. The honest divergence figure for chimp-human is not 1.2 percent. It is somewhere between 12.5 and 14 percent of the genome, depending on which haplotypes you measure. Translated to base pairs: roughly 375 million additional base pairs of difference that the SNP count never captured, for a total genuine divergence of approximately 415 million base pairs between the two species.
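The conversion from percentage to base pairs can be sketched as follows. This assumes the same round 3 billion base pair genome used earlier and treats the 12.5 percent minimum as applying to the whole genome. It is a back-of-the-envelope check, not a reproduction of anything Yoo et al. computed.

```python
# Round figures from the text; the 3 billion bp genome length is an approximation.
GENOME_BP = 3_000_000_000
GAP_FRACTION_MIN = 0.125     # 12.5% minimum gap divergence quoted for chimp-human
ALIGNED_DIFFS = 40_000_000   # the traditional 35M SNP + 5M indel count

# Base pairs affected by gap divergence at the 12.5% minimum.
gap_bp = round(GENOME_BP * GAP_FRACTION_MIN)

# Add the differences already counted inside the aligned regions.
total_bp = gap_bp + ALIGNED_DIFFS

# How much larger the combined figure is than the traditional count.
ratio = total_bp / ALIGNED_DIFFS

print(f"gap divergence: {gap_bp:,} bp")    # 375,000,000 bp
print(f"total:          {total_bp:,} bp")  # 415,000,000 bp
print(f"ratio to 40M:   {ratio:.1f}x")     # 10.4x
```

The 415 million total is the figure carried forward into the MITTENS calculation, and the roughly tenfold ratio is where the "order of magnitude" comes from.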

That is not a refinement. That is an order of magnitude.

What This Does to the MITTENS Calculation

This makes the MITTENS argument considerably stronger. The probability of evolution by natural selection is now less than zero. The original MITTENS shortfall against the chimp-human gap was 220,000-fold. That number was computed against a requirement of 20 million fixations on the human lineage, which is half of the standard 40-million-difference figure.

Since the genuine chimp-human divergence is 415 million base pairs rather than 40 million, the requirement on the human lineage rises from 20 million fixations to roughly 207 million. A maximum of 91 fixations on the human lineage in the time available was the ceiling before, and it remains the ceiling now. The shortfall ratio rises from 220,000-fold to roughly 2.3 million-fold against the chimp-human gap alone.
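The shortfall arithmetic is simple enough to check directly. The sketch below takes the 91-fixation ceiling and the half-per-lineage split as given by the text; the function name `shortfall` is illustrative only, and nothing here re-derives the ceiling itself.

```python
MAX_FIXATIONS = 91  # ceiling on human-lineage fixations, as stated in the text


def shortfall(divergence_bp: int, max_fixations: int = MAX_FIXATIONS) -> float:
    """Ratio of required to achievable fixations on one lineage.

    Half of the total divergence is assigned to the human lineage,
    following the split used in the text.
    """
    required = divergence_bp / 2
    return required / max_fixations


old = shortfall(40_000_000)    # against the traditional 40M figure
new = shortfall(415_000_000)   # against the corrected 415M figure

print(f"first edition:  {old:,.0f}-fold")   # 219,780-fold
print(f"second edition: {new:,.0f}-fold")   # 2,280,220-fold
```

These round to the 220,000-fold and roughly 2.3 million-fold figures quoted above.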

And every structural difference longer than a single base pair makes the problem mathematically worse, not better. A point mutation requires one mutation event and one fixation event. A 50,000 base pair insertion or a chromosomal inversion requires the entire structural rearrangement to occur as a single low-probability event and then to fix. Counting these by base pair, as the gap-divergence figure does, is generous to the standard model. Counting them by independent fixation events would be more devastating still.

The Yoo paper does not report this calculation. The Yoo paper reports the data and lets the reader draw the conclusion. The second edition of PROBABILITY ZERO will draw the correct conclusions.

The Drift Defense Just Got Worse

Some defenders of the standard model, like Dennis McCarthy, have retreated from selection to drift. If natural selection cannot accomplish the work, perhaps neutral evolution and incomplete lineage sorting can carry the load.

This was already the weakest argument in the first edition’s bestiary of failed defenses. The first edition documents four independent reasons why incomplete lineage sorting cannot rescue the model: the quantitative ceiling on ancestral polymorphism, the demographic contradiction, the relocation rather than elimination of the fixation requirement, and the haplotype block bound. Each reason alone is sufficient to destroy the ILS defense.

Yoo et al. happen to claim, in the same paper, that incomplete lineage sorting accounts for 39.5 percent of the autosomal genome, and treat it as a vindication of the standard drift model. They are mistaken. The ILS objection collapses for the same four reasons documented in the first edition, and the second edition will engage Yoo specifically to demonstrate this. Their inflated ILS figure does not rescue anything. It simply distributes the fixation requirement across both lineages instead of consolidating it on one. Each lineage still has to do its share of the work, and each lineage still cannot.

But here is the larger problem for the drift defense, and it is the problem the second edition will press hard: the gap divergence is not the sort of variation that ILS can plausibly produce in the first place. ILS sorts ancestral polymorphisms into reciprocal fixation. A single nucleotide polymorphism in the ancestral population can sort one way in humans and another way in chimps. Fine. But a 4.8 megabase inverted transposition — like the one Yoo et al. document on gorilla chromosome 18 — is not a polymorphism that the ancestor was carrying around in heterozygous form for millions of years. It is a structural rearrangement that occurred in a specific lineage at a specific time, and either fixed or did not fix. ILS cannot sort what was never segregating. Structural variation is, with very few exceptions, post-divergence, and it must be accounted for by the same fixation arithmetic that the SNPs already break.

The defender of the standard model is now caught in a worse vise than before. Selection cannot accomplish 415 million base pairs of divergence in 6 to 9 million years. Drift would find it even harder to accomplish 415 million base pairs of divergence in 6 to 9 million years. Incomplete lineage sorting cannot account for the structural component of that divergence at all, and the SNP component it might address is still subject to the four-fold collapse already documented.

There is nowhere left to retreat to.

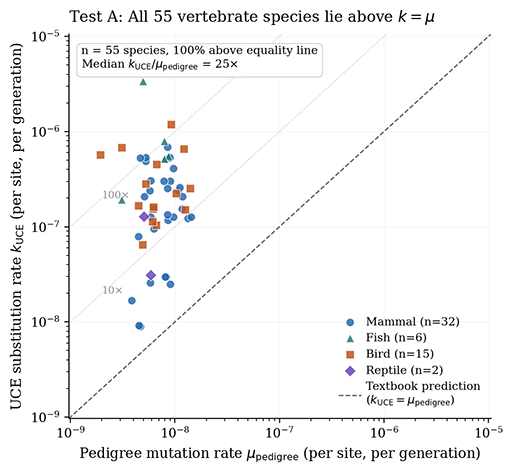

The Molecular Clock Was Already Broken

Long-time readers will know that the first edition led to a paper about the molecular clock (namely, that Kimura’s 1968 derivation of k = μ rests on an invalid cancellation between census N and effective N~e~), which led to a recalibration of the chimp-human divergence date from 6 to 7 million years to somewhere in the range of 200,000 to 400,000 years. That argument is fully developed in the Recalibrating CHLCA Divergence paper and will be incorporated into the second edition as a dedicated chapter.

What the Yoo paper adds to this picture is empirical confirmation that the standard molecular methods produce internally inconsistent results even on their own terms. Yoo et al. report ancestral effective population sizes of N~e~ = 198,000 for the human-chimp-bonobo ancestor and N~e~ = 132,000 for the human-chimp-gorilla ancestor. These figures are derived from incomplete lineage sorting modeling and from the molecular clock. They are an order of magnitude larger than any N~e~ estimate that has been derived from clock-independent methods, including the N~e~ = 3,300 we derive from ancient DNA drift variance and the N~e~ = 33,000 we derive from chimpanzee geographic drift variance.

The molecular clock estimates of N~e~ are inflated because the clock assumes k = μ. When k = μ is wrong — and it is wrong, by a factor of N divided by N~e~ — the N~e~ derived from genetic diversity absorbs the error. Yoo et al. cite the inflated number. The inflated number is what their methods can produce. Their methods cannot detect the error because the error is built into the methods.
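For readers who want the algebra, the claim can be sketched in two lines. This is a compact reading of the argument as summarized here, assuming a diploid population with census size N, effective size N~e~, and per-generation neutral mutation rate μ. It is not a reproduction of the derivation in the Recalibrating CHLCA Divergence paper.

```latex
% Standard neutral-theory derivation: 2N\mu new mutations arise per generation,
% each fixing with probability 1/(2N), so the population size cancels.
k = 2N\mu \cdot \frac{1}{2N} = \mu

% The argument summarized above: if the fixation probability is governed by the
% effective size N_e rather than the census size N, the cancellation fails.
k = 2N\mu \cdot \frac{1}{2N_e} = \mu \,\frac{N}{N_e}
```

The second line is where the factor of N divided by N~e~ comes from.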

For the second edition, this means the cascade gets cleaner. The N~e~ = 3,300 figure from ancient DNA, the N~e~ = 33,000 figure from chimpanzee subspecies drift, and the k = μ correction together yield a recalibrated chimp-human split of approximately 200 to 400 thousand years ago. At that recalibrated date, the MITTENS shortfall ratio rises from 2.3 million-fold (against the corrected divergence figure at the consensus clock date) to 40 million-fold (against the corrected divergence figure at the corrected clock date).
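The text does not spell out how the fixation ceiling is rescaled to the new date, so the following is only a rough sketch under one loudly stated assumption: that the number of achievable fixations scales linearly with elapsed time, so that the shortfall ratio scales with the ratio of the old date to the new one. The function name and the specific date pairings are illustrative, not taken from the book.

```python
BASE_SHORTFALL = 2_300_000  # corrected-divergence shortfall at the consensus date


def rescaled_shortfall(base: float, consensus_years: float,
                       recalibrated_years: float) -> float:
    """Scale a shortfall ratio to a shorter time window.

    ASSUMPTION (not from the source): achievable fixations shrink linearly
    with available time, so the shortfall grows by the same factor.
    """
    return base * consensus_years / recalibrated_years


# Most and least favorable pairings of the date ranges quoted in the text.
low = rescaled_shortfall(BASE_SHORTFALL, 6_000_000, 400_000)
high = rescaled_shortfall(BASE_SHORTFALL, 7_000_000, 200_000)

print(f"low end:  {low:,.0f}-fold")    # 34,500,000-fold
print(f"high end: {high:,.0f}-fold")   # 80,500,000-fold
```

Under that assumption, the 40 million-fold figure falls inside the resulting range.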

A theory off by a factor of 40 million is not a viable theory. It is a fairy tale.

What Goes Into the Second Edition

The second edition of PROBABILITY ZERO will include:

The corrected divergence figures throughout, citing Yoo et al. 2025 as the authoritative source. Every calculation that depended on the 35-40 million SNP count will be updated. The 1.2 percent figure will be addressed directly as a historical artifact of methodologically convenient bookkeeping, with the honest 12.5 percent figure replacing it.

A new chapter on what happens when you actually count the unalignable regions, including reproduction of the relevant gap-divergence table from Yoo’s Supplementary Figure III.12. The reader will be able to verify the source for themselves.

A dedicated chapter incorporating the N/N~e~ correction to Kimura’s substitution rate and the resulting recalibration of the chimp-human divergence date. This material previously existed as a separate working paper and will now be properly woven into the book’s main argument.

Updated MITTENS shortfall ratios reflecting both the corrected divergence figures and the recalibrated divergence date. The standard model fails by roughly 30 to 100 million-fold in the second edition, against 220,000-fold in the first.

A direct engagement with the Yoo et al. 2025 incomplete lineage sorting claim, demonstrating that the inflated ILS figure does not rescue the model and cannot in principle account for the structural divergence component.

A clarified treatment of the cascade: when the chimp-human divergence date moves, every primate divergence date calibrated against it moves with it. The hominoid slowdown is a calibration artifact. The deep evolutionary timescale of mammalian evolution depends on these calibrations. The second edition will trace these consequences explicitly.

A Note on How This Happened

The first edition was completed in late 2025. The Yoo paper was published in April 2025. The architecture of the book’s argument had been in place for six years by the time the paper was published, and I wasn’t looking for revisions of the consensus numbers. I cited the 2005 consortium paper because it was the standard citation, and to my regret, I never considered searching for a more recent paper.

That is not an excuse. It is what happened. The first edition is what it is, and it is good — the argument stands at the figures used. But the second edition will be substantially better, and the argument it makes will be unanswerable in the same way the first edition’s argument could not be answered.

The leather edition deserves to be the canonical version. The trade hardcover and the ebook deserve to ship with the corrected text at the same time. Existing readers who have the first edition will own a first printing of a book that was, at the time of its publication, the most rigorous mathematical challenge ever posed to Neo-Darwinian theory. And new readers of the second edition will get an even stronger version of the argument with the most authoritative possible sources.
