Can you explain how the refutation of Kant affects our lives today in simplified terms that anyone can understand?

Most people have never heard of Immanuel Kant, but almost everyone lives inside a concept he created. The idea sounds humble enough: human reason can’t know reality as it truly is, only how it appears to us through our limited human senses. That sounds modest, even wise. But once you accept the idea as a real limitation, a strange consequence follows. If nobody can actually know how things really are, then every statement about reality becomes just one more opinion, one more “perspective,” and none of those opinions or perspectives can ever be proven true. Refuting Kant’s idea, and showing that it is neither a rule nor a real limitation, is extremely significant because it puts the possibility of actual knowledge back on the table.

Here are five ways the refutation of Kant’s idea about unknowability changes your world.

First, expertise and “the science.” For decades, people have been told to accept various claims because “experts agree” or “studies show,” and to treat the matter as settled and beyond question. Kant’s rule props this up: if reason can’t reach reality directly, then truth becomes whatever the credentialed authorities say it is, because there’s no independent reality you can check them against. Refuting the rule restores the obvious: there is a real world, so expert scientific predictions either come true or they don’t, and an expert who keeps being wrong is wrong no matter how many credentials he holds. You aren’t dependent on the experts or the scientists; you’re allowed to check reality yourself.

Second, the idea that “everyone has their own truth.” This phrase is everywhere now, and it descends directly from Kant’s idea. If reality is locked away and we only ever see our own version of it, then your truth and my truth are just two filtered views, and neither can be more correct than the other. Refuting the doctrine eliminates this. There is one reality. People can be honestly mistaken about it, and perspectives can be partially correct, but “true for me” stops being a meaningful position. Some claims match reality and some don’t, and which is which is not up to how you feel about it.

Third, morality. If we can’t know how things really are, then we also can’t know how things really should be, and right and wrong automatically collapse into preference, culture, or power, with the strongest, loudest voice defining them. This is why so many moral arguments today end in “who are you to judge?” Refuting unknowability reopens the possibility that good and evil are real features of the world, discoverable like other truths, not just labels we stick on things we happen to like or dislike. That changes how seriously you can take a moral claim, your own included.

Fourth, science and discovery itself. Kant’s rule says human reason can’t identify anything about reality that isn’t already handed to us through experience. But that’s not how the greatest discoveries actually worked. The planet Neptune was found by pure calculation first: a mathematician worked out that something unseen had to be tugging on Uranus, predicted exactly where to point the telescope, and there it was. The same thing happened with antimatter, predicted on paper before anyone detected it. Reason reached out and grabbed a piece of reality nobody had experienced yet. If Kant’s rule were true, those triumphs couldn’t have happened. Refuting it explains why the human mind really can discover the world, not just sort the impressions it’s given.

Fifth, your ability to have confidence in your own thinking. The quiet cost of Kant’s rule is humility turned into paralysis: who am I to claim I know anything, when the smart position is that real knowledge is impossible? That mindset trains people to defer, to hedge, and to assume the truth is forever out of reach and someone else’s call. Refuting the doctrine gives that confidence back. Your reasoning is a real instrument that makes real contact with the real world. You can investigate, conclude, and stand on what you find. You will not be right about everything, and partial knowledge is still the human condition. But the door to truth was never locked, and reality was never off limits. Kant simply declared that it was, and a great many people have misplaced their trust in that assertion for nearly two hundred and fifty years.

The refutation of Kant is therefore akin to a creature that believed it was a fish discovering that it had merely been swimming in water all along, and realizing that not only can it breathe air and walk on land, but it also has wings and can fly.

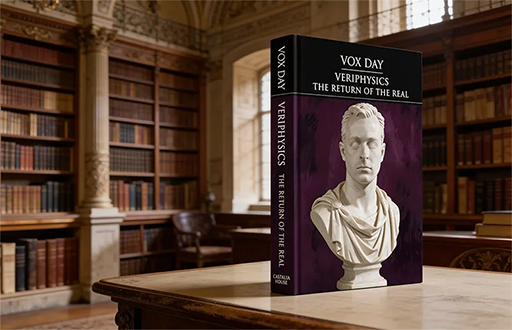

In related news, VERIPHYSICS: THE RETURN OF THE REAL is now available for preorder in hardcover and paperback editions from NDM Express. The books should be available at Amazon, Barnes & Noble, and other bookstores next week. They contain The Treatise, The Refutation, and the Agrippan Trilemma challenge.
